AI Cheating Escaped the Classroom. Now It’s in Job Interviews.

Here is a detail that should give every student pause.

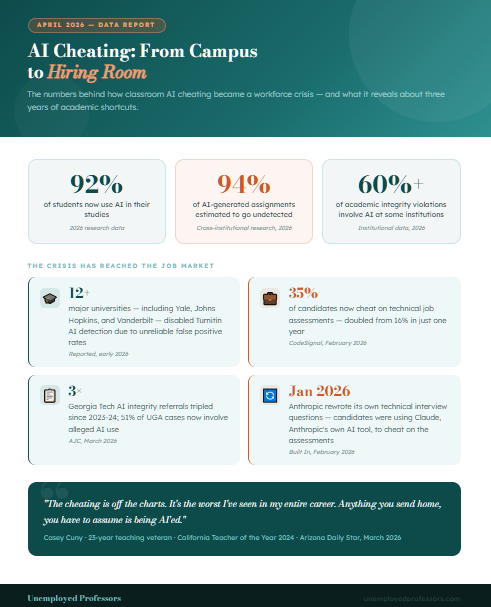

In January 2026, Anthropic, the company that makes Claude (one of the world’s most widely used AI tools), quietly admitted that it had to rewrite its technical interview questions. The reason: candidates were using Claude to cheat on their coding assessments.

Read that again. The people who built the AI being used to cheat had to change their own hiring process because the cheating had become unmanageable.

A month later, CodeSignal released data showing that cheating on technical job assessments had doubled in just one year — from 16 percent to 35 percent. One in three candidates is now cheating on the technical evaluations that determine whether they get hired.

And separately, last week, Casey Cuny, a 23-year teaching veteran who was California’s 2024 Teacher of the Year, told the Arizona Daily Star something that has been echoing through faculty lounges across the country: “The cheating is off the charts. It’s the worst I’ve seen in my entire career. Anything you send home, you have to assume is being AI’ed.”

These three data points, taken together, tell a story that higher education has been trying not to face clearly: the AI cheating that started in classrooms has not stayed there. It has followed students into their careers. And now everyone — employers, universities, and the students themselves — is dealing with the consequences.

How the Cheating Moved From Papers to Paychecks

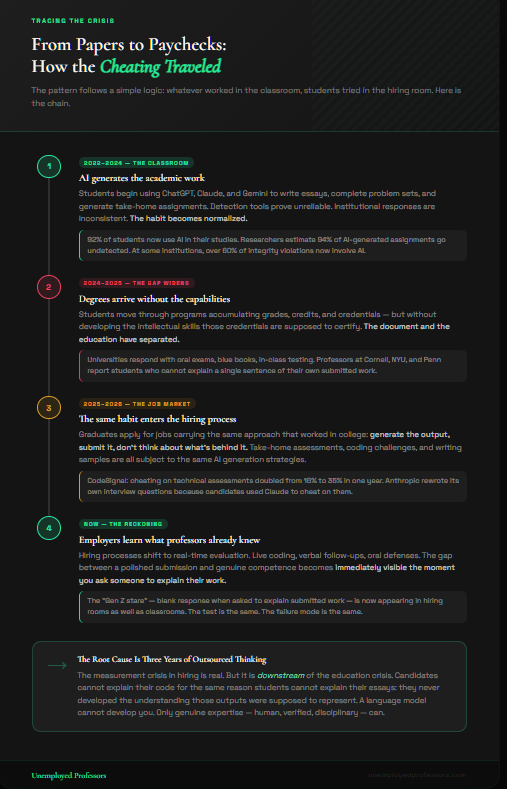

The pattern is not hard to follow once you see it laid out.

For three years, students at universities across the country have been using AI to generate their academic work. The scale is genuinely staggering. According to recent research, 92 percent of students now use AI in their studies. Some researchers estimate that 94 percent of AI-generated assignments are never detected at all. At some institutions, AI-related academic misconduct now accounts for more than 60 percent of all integrity violations.

The institutional response — detection tools, oral exams, blue book revivals, honor code updates — has been reactive and inconsistent. Faculty are, in the words of one professor at George Washington University, “incredibly frustrated” because the available detection tools cannot reliably catch the misconduct. Some universities have disabled Turnitin’s AI detection entirely because the false positive rate is too high to be trusted for consequential decisions.

The result is that a significant portion of students over the past three years have moved through their academic programs without developing the skills that academic writing and problem-solving are designed to build. They have documents — grades, transcripts, degrees — but not the capabilities those documents are supposed to certify.

And then they graduated. And started applying for jobs.

The cheating moved from papers to paychecks because the habit moved with the students. If using AI to complete a take-home assignment worked in college, why would a candidate not try the same approach on a take-home coding assessment? If generating a polished-sounding essay with zero original thinking worked for three years, why not apply the same approach to cover letters, writing samples, and interview preparation?

Increasingly, employers are responding the way professors at Cornell, NYU, and Penn have for the past several months: they are asking follow-up questions. They are conducting verbal interviews after written assessments. They are designing evaluations that require candidates to demonstrate understanding in real time rather than produce a document.

And the blank stare that professors have been calling the “Gen Z stare” is showing up in hiring rooms too.

The Anthropic Problem Is Actually Everyone’s Problem

The story of Anthropic rewriting its interview questions is striking precisely because of the company involved. Anthropic is not a traditional employer struggling to understand AI. It is one of the most sophisticated AI research organizations in the world. Its employees quite literally build the tools being used to cheat on their own assessments.

And even Anthropic could not design interview questions resistant enough to prevent candidates from using Claude to game the process.

Built In, which reported on the story in February, made an observation worth sitting with: “Anthropic didn’t prove that candidates are cheaters. They proved that the test is broken.” The argument is that if coding ability is now something AI can simulate, testing for coding ability in isolation no longer measures what we want it to measure.

That is a thoughtful point about hiring practice. But it sidesteps a more uncomfortable truth about what has actually happened.

The reason technical interview questions need to be redesigned is not just that AI can generate code. It is that a growing number of candidates cannot explain the code they submit, because they did not write it and do not understand it. The cheating crisis in hiring is downstream of the cheating crisis in education. The candidate who leans on AI in an interview and the student who cannot explain an essay in office hours share the same problem: both have learned to produce outputs without developing the understanding that those outputs are supposed to represent.

The measurement crisis in hiring is real. But the root cause is an education crisis that three years of AI-generated academic work has been quietly building.

Employers Are Now Learning What Professors Already Know

Casey Cuny’s quote — “Anything you send home, you have to assume is being AI’ed” — is not just a teacher’s observation about high school English assignments. It is now equally true for hiring managers evaluating take-home coding projects, writing samples, and analytical exercises.

Employers are arriving at the same conclusion professors reached over the past year: any asynchronous assignment that can be completed with AI will be. The quality of the submission tells you almost nothing about the capability of the person who submitted it.

The response in hiring, as in academia, has been a shift toward real-time evaluation. More technical interviews are being conducted live. More companies are adding verbal follow-up components to written assessments. More hiring processes are including discussions where candidates must explain, defend, and extend their submitted work.

This is, in structure, exactly what Cornell’s Chris Schaffer, NYU’s Panos Ipeirotis, and Penn’s Emily Hammer arrived at through a different path. The solution to not being able to trust written work is to require people to demonstrate understanding in real time, in front of someone who actually knows the subject and can recognize the difference between genuine competence and algorithmic simulation.

The university and the hiring room are converging on the same problem and the same solution. What they are both discovering is that the gap between having a document and having an education is visible the moment you ask someone to speak rather than produce.

The Real Stakes for Students

Here is the part of this story that is not getting enough attention.

Students who used AI to generate their academic work for the past three years have not just risked academic integrity violations. They have arrived at graduation without the intellectual capabilities their degrees are supposed to certify. They cannot explain their essays. They cannot defend their analyses. They cannot extend their arguments under questioning. They handed three years of intellectual development off to a language model that cannot develop them, and now they are entering a job market that is rapidly designing evaluations to distinguish between genuine competence and AI-produced simulation.

The students who used AI as a crutch are not just at risk of being caught cheating on job assessments. They are at risk of discovering that they simply cannot do the work that their academic record suggests they can.

This is a harder problem to solve than a policy or a detection tool. You cannot update a syllabus to give someone back three years of intellectual formation they did not do. You cannot run their thinking through Turnitin and get their capability back.

What you can do — what there is still time to do, for students who are currently in their programs — is make different choices about how you get academic help.

The Difference That Matters

There is an important distinction buried in the current conversation about AI cheating that keeps getting missed.

The problem is not that students get help with their academic work. The problem is the kind of help they are getting.

When a student uses AI to generate their essay, paper, or problem set, they receive output. They do not receive an education. The AI does not understand the subject, cannot answer follow-up questions, and cannot transfer any capability to the student. Using it is a way of having a document without doing the thinking that the document is supposed to represent. And when the oral exam happens — or the job interview, or the first week of work — there is nothing there.

When a student works with a genuine human expert who actually understands the subject, the dynamic is entirely different. The expert produces work that reflects real scholarship in the field. The student can read it, engage with it, ask questions about it, compare it to their own thinking, and use it as a model of what genuine academic quality looks like in their discipline. They receive access to real expertise rather than statistical approximation.

This is the distinction that Unemployed Professors has been built on since 2010: twelve years before ChatGPT launched and the current crisis began, and sixteen years before the hiring room started looking like the classroom.

Our writers are not algorithms. They are human beings who actually know the subjects they write about — verified credentials, native English speakers, subject-matter experts who produce authentic scholarship grounded in genuine disciplinary understanding. When you receive work from Unemployed Professors, you receive a model of how a real expert thinks about your topic. You receive something you can engage with. Something you can learn from. Something you can, if you choose to, actually discuss.

That is the difference between getting help from a human expert and getting output from a machine. And it is a difference that the current crisis — in classrooms and in hiring rooms — is making impossible to ignore.

The Bottom Line

AI cheating has escaped the classroom. It is now documented in technical job assessments, architectural interviews, writing evaluations, and hiring processes across industries. The company that built one of the most widely used AI tools had to rewrite its own interview questions because the cheating had outrun the tests.

The institutions that are successfully navigating this crisis — in both education and hiring — are the ones that have moved away from trusting asynchronous written work and toward real-time demonstration of genuine understanding. They are asking people to explain, defend, and extend their thinking. They are discovering, repeatedly, that the gap between a polished document and actual competence is visible the moment you ask someone to speak.

Students who built their academic records on AI output are going to find that gap very visible, very soon.

Students who built their academic records on genuine human expertise — whether their own, developed through real engagement with the material, or that of a genuine subject-matter expert whose work they could learn from — are the ones who will have something to say when the follow-up questions come.

That is what Unemployed Professors has always provided. And it has never been more relevant than it is right now.