Two days ago, MIT published a Q&A with Agustín Rayo, the Dean of MIT’s School of Humanities, Arts, and Social Sciences. The piece ran as SHASS marked its 75th anniversary. It is one of the most direct statements a senior academic leader has made about what universities actually need to do in the age of AI, and it lands squarely on the argument that higher education has been circling for three years without quite making.

Asked why universities responding to AI by launching new technical programs or updating curricula are missing the point, Dean Rayo said this: the most important question universities need to ask is not how to adapt pedagogy to AI. The most important question is how to provide an education that brings real value to students in the age of AI.

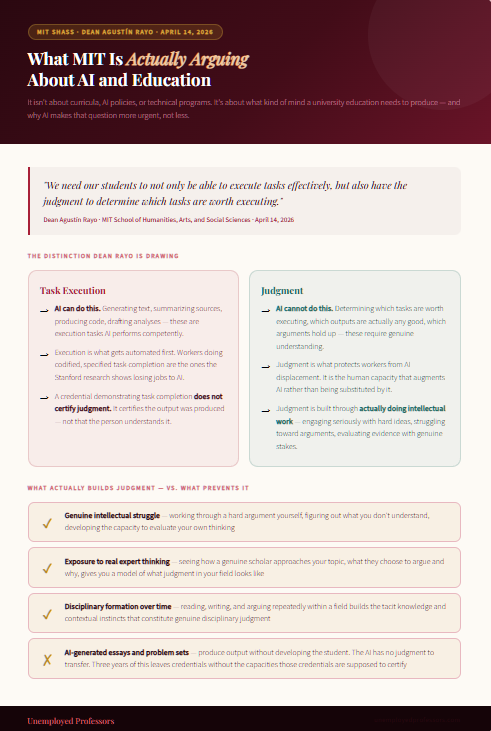

And then, more specifically: “We need to produce students with minds that are both nimble and broad. We need our students to not only be able to execute tasks effectively, but also have the judgment to determine which tasks are worth executing.”

That second sentence is the one that matters. Not the ability to execute tasks — AI can execute tasks. The judgment to determine which tasks are worth executing. That is a human capability. It requires genuine intellectual formation, not credential accumulation. It requires having actually done the thinking, not having submitted documents that simulate thought.

MIT’s Dean of Humanities is describing exactly the educational outcome that three years of AI-generated academic work has been systematically preventing.

What MIT Is Actually Saying

Dean Rayo’s argument is worth sitting with carefully, because it is both more radical and more obvious than it might initially appear.

Most institutional responses to AI in higher education have focused on one of two things: either trying to restrict AI use through detection and policy enforcement, or trying to integrate AI use more formally into pedagogy and curriculum. Both responses treat AI as the central variable — the thing to be managed, restricted, or harnessed.

Rayo is saying neither of those is the most important question. The most important question is what an education actually needs to produce in a human being. And his answer is not a set of AI-specific skills or AI-adjacent technical knowledge. His answer is judgment — the capacity to evaluate what is worth doing, to reason about which tasks have value and which can be delegated, to bring genuine human understanding to bear on questions that AI cannot adjudicate.

He put it directly: “Strengthening the humanities at MIT isn’t a departure from our core mission — it’s a way of ensuring that our technical leadership continues to matter in the world.”

This is MIT — the most technically oriented research university in the world — explicitly arguing that the response to AI is to double down on genuine humanistic intellectual formation. Not AI literacy. Not prompt engineering. Not updated technical curricula. Genuine intellectual breadth. Real judgment. Authentic human understanding of what matters and why.

The parallel to 1950 is worth noting. MIT founded SHASS in response to another moment of technological transformation — the nuclear age — when a faculty committee argued that MIT’s technical leadership would only matter if it was integrated with genuine humanistic understanding of the world those technologies were reshaping. Seventy-five years later, the Dean of that school is making the same argument about AI. The institution that produces the most technically sophisticated graduates in the world is arguing that the answer to the AI moment is more genuine humanistic education, not less.

The Problem MIT Isn’t Quite Saying Out Loud

Dean Rayo is right about what education needs to produce. The problem is that he is not quite saying the part that follows directly from his argument: the current state of AI use in university education is systematically preventing the development of the very qualities he is describing.

You cannot produce students with genuine judgment — the ability to determine which tasks are worth executing, to reason carefully about what has value and what does not — if those students have spent their undergraduate years using AI to generate the work that was supposed to develop those qualities.

The intellectual work of university education is not primarily about producing essays and problem sets. It is about developing the capacities that the process of producing essays and problem sets requires and builds: the ability to engage seriously with complex ideas, to struggle toward an argument, to evaluate evidence, to recognize what you do not understand and figure out how to address it. These are the capacities that constitute genuine judgment. They are built through genuinely doing the intellectual work, not through supervising AI systems that do it instead.

A student who has used ChatGPT to generate their essays for three years has not merely avoided the work of university education. They have avoided developing the capacities that the work of university education exists to build. They arrive without those capacities at graduation, at the job market, and at the situation Dean Rayo is imagining, where they must judge which AI-generated outputs are worth using and which are not.

This connects directly to what the Stanford Digital Economy Lab found in research published last year: the workers most vulnerable to AI displacement are not those in particular fields, but those doing codified knowledge work — tasks that can be fully specified and automated. The workers who are not being displaced are those whose value comes from tacit knowledge, contextual judgment, and genuine disciplinary expertise that cannot be fully codified because it was built through years of real intellectual engagement.

Dean Rayo is describing what needs to be built. The uncomfortable corollary is that you cannot build it through three years of outsourcing intellectual work to language models.

The Gap Between What Gets Said and What Gets Done

There is a striking tension in how higher education is responding to this moment. In the same week that MIT’s humanities dean published a piece arguing that genuine judgment is the core educational outcome that AI makes more essential, Gallup and Lumina Foundation data showed that nearly half of college students have considered changing their major because they are anxious about AI’s impact on their job prospects. Yet nine in ten students are confident their current education is preparing them for their futures.

That confidence sits uncomfortably alongside what the Gallup data also shows: 64 percent of students use AI daily or weekly to get help with coursework they do not understand, 60 percent use it to check homework answers, and more than half use it regularly to edit their writing. The education is supposed to be building judgment. The students are using AI to complete the assignments that are supposed to build it.

The institutions navigating this most honestly are the ones that have shifted toward assessment methods that require students to demonstrate genuine understanding in real time rather than produce polished documents asynchronously. The oral exam, the live discussion, the defended argument: these are methods that test whether the education actually produced what Dean Rayo says it needs to produce. But those methods are hard to scale, and most institutions are still relying primarily on written assignments that AI can generate at least as competently as exhausted undergraduates — and often more fluently.

The Part That Connects to How Students Get Help

Dean Rayo is not arguing against students getting help with their academic work. MIT has writing centers, tutoring programs, and a culture of intellectual collaboration. Getting help — from experts, from peers, from resources that model genuine scholarship — is part of education, not a departure from it.

What he is arguing against, in effect, is the substitution of AI output for the genuine intellectual work that develops judgment. The difference is between help that supports intellectual formation and help that replaces it.

This is precisely the distinction that Unemployed Professors is built on.

When a student uses AI to generate an essay, they receive output without understanding. The AI has no genuine judgment about the subject, cannot model what genuine intellectual engagement with the topic looks like, and cannot transfer anything to the student because it has nothing to transfer. The student receives a document that satisfies an assignment requirement while leaving them exactly as undeveloped as before.

When a student works with a genuine human expert — a verified scholar who actually knows the subject, has read the relevant literature, and has thought carefully about the specific topic — the dynamic is entirely different. The student receives work that reflects genuine intellectual engagement. They can read it, engage with it, ask questions about it, compare it to their own thinking, and use it as a model of what genuine scholarly judgment in their discipline actually looks like. The expert’s judgment is accessible to them in ways that AI output is not — because genuine human judgment is legible to other humans in ways that statistical text generation fundamentally is not.

Dean Rayo says students need to develop the judgment to determine which tasks are worth executing. That judgment is built through exposure to genuine expert thinking — seeing how a real scholar approaches a problem, evaluates evidence, and determines what matters. A language model cannot provide that exposure because it has no genuine judgment to model. A genuine human expert can.

This is what Unemployed Professors has provided since 2010: genuine human expertise from verified scholars who actually understand the subjects they write about. Not statistical approximation of academic prose. Not the simulation of judgment. Actual intellectual engagement with your specific topic, conducted by someone who has spent years developing the real thing.

The Bottom Line

MIT’s Dean of Humanities is right. The most important question universities face in the age of AI is not how to update their curricula or manage AI use. It is how to produce graduates with genuine judgment — minds broad enough and formed enough to determine which tasks are worth doing and why, and to bring real human understanding to questions that AI cannot adjudicate.

That kind of judgment is built through genuine intellectual work. It cannot be accumulated through three years of AI-generated credentials and then recovered later. It has to develop through the actual process of struggling with hard ideas, engaging seriously with expert thinking, and building real understanding over time.

For students navigating this environment, the relevant question is not whether to get help with academic work — it is what kind of help actually serves your genuine interests. Help that produces output without developing you leaves you exactly where Dean Rayo is worried you will end up: capable of executing tasks, but without the judgment to determine which ones are worth executing.

Help that gives you access to genuine human expertise — to real scholarly thinking about your specific subject that you can engage with and learn from — moves you in the other direction. It is the kind of help that supports the development MIT’s Dean of Humanities is calling for, rather than substituting something statistically plausible in its place.

Unemployed Professors has been providing that kind of help since 2010. Verified human scholars. Genuine disciplinary expertise. Real intellectual engagement with your work. Something you can actually learn from.