Nearly Half of Students Want to Change Their Major Because of AI. That’s the Wrong Answer.

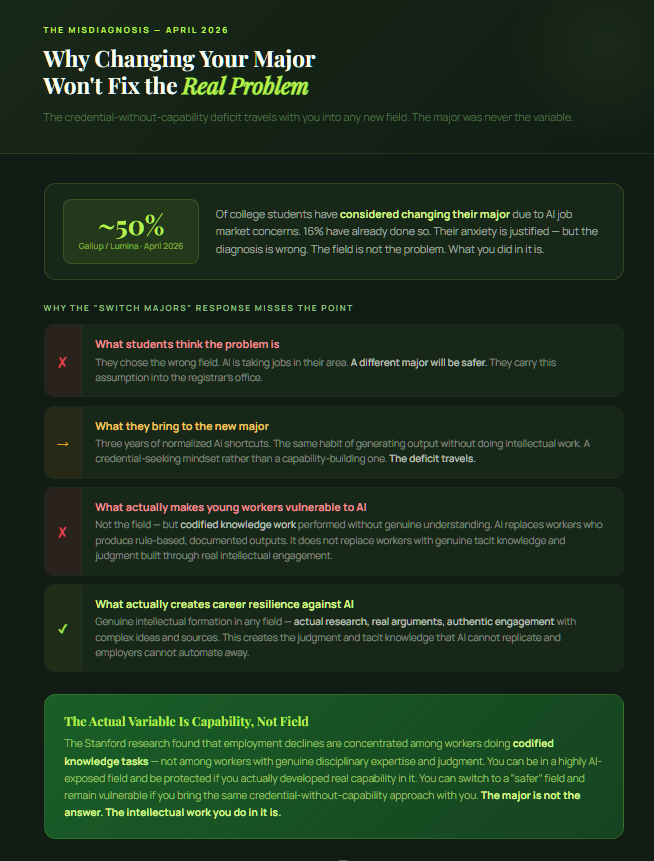

A Gallup and Lumina Foundation survey released this week found that nearly half of currently enrolled college students have considered changing their major because of concerns about AI’s impact on the job market. Sixteen percent have already done it.

That number is striking. It represents hundreds of thousands of students making consequential academic decisions in response to genuine, well-founded anxiety about what AI means for their professional futures.

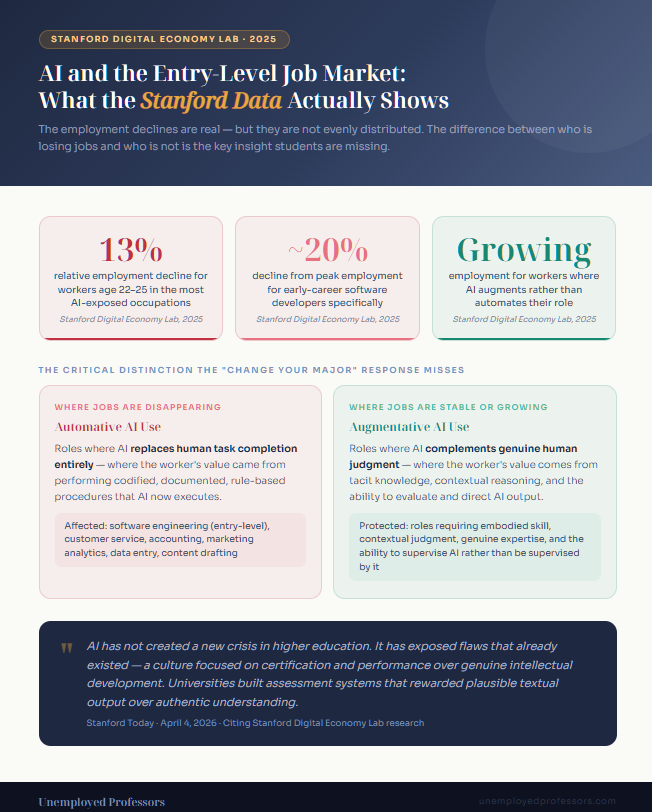

The anxiety is justified. A Stanford Digital Economy Lab working paper — now being cited in higher education circles as one of the most rigorous labor market analyses of the AI era — found that early-career workers aged 22 to 25 in the most AI-exposed occupations have experienced a 13 percent relative decline in employment since the widespread adoption of generative AI tools. For software developers specifically, the decline from peak employment in late 2022 is close to 20 percent.

The students reconsidering their fields are responding to something real. AI is eating entry-level jobs in the occupations most exposed to it. The traditional path from graduate to employed professional has narrowed significantly in less than three years.

But here is the problem with changing your major: the major is not the actual issue, and changing it will not fix what is actually wrong.

What the Stanford Research Actually Shows

The Stanford paper, led by economist Erik Brynjolfsson, is worth understanding carefully because it contains a distinction that the “just change your major” response completely misses.

The employment declines are not evenly distributed across AI-exposed occupations. The study found that declines are concentrated in jobs where AI is being used to automate work — to substitute for employee labor entirely. In contrast, employment is growing in occupations where AI is being used to augment workers — to complement human capability rather than replace it.

The difference between these two outcomes is not primarily a question of which field you study. It is a question of whether you bring genuine intellectual capability to your work or whether you bring a credential that certifies you completed coursework without developing the underlying thinking that coursework was designed to produce.

An employer who previously needed ten engineers and now needs two skilled engineers and an AI agent can let the other eight go because those eight were doing tasks that required codified knowledge, the kind of knowledge that AI can now retrieve and apply. What the two skilled engineers have that AI cannot replicate is judgment, tacit knowledge, and disciplinary formation built through years of genuine engagement with hard problems: the ability to evaluate AI output rather than just produce it.

A Stanford article published this week put the point directly: AI has not created a new crisis in higher education. It has exposed flaws that already existed — specifically, a culture focused on certification and performance over genuine intellectual development. Universities built assessment systems that rewarded the production of plausible textual output over authentic understanding. Now that AI can produce plausible textual output on demand, those assessment systems are revealing what they never actually measured.

Students who spent three years producing AI-generated work to satisfy assessment requirements are not going to solve their employment problem by switching from computer science to nursing. They are going to carry the same deficit — credential without capability — into a new field.

The Actual Problem Is Not the Field. It’s What You Did in It.

The Gallup and Lumina survey found something else worth noting alongside the major-change data: about nine in ten college students are confident that their degree is teaching them career-relevant skills that will help them secure employment.

These two findings (nearly half considering changing majors due to AI anxiety, and nine in ten confident their degree is building relevant skills) sit in uncomfortable tension with each other. Students are worried about the job market impact of AI. They are simultaneously confident their education is preparing them for it.

The Stanford employment research suggests at least some of that confidence may be misplaced — not because the fields are wrong, but because of how students are engaging with their education. Young workers who are vulnerable to AI displacement are overwhelmingly those doing codified knowledge work: tasks that are defined by formal rules, standard procedures, and documented processes. These are exactly the tasks that AI now performs competently.

The workers who are not being displaced are those whose value comes from something that cannot be codified: judgment developed through genuine experience, tacit knowledge built through repeated real engagement with complex problems, the ability to contextualize AI output within authentic disciplinary understanding.

What creates that judgment, that tacit knowledge, that authentic disciplinary understanding? The same thing that has always created it: actually doing the intellectual work. Reading the sources. Struggling through the argument. Writing the analysis yourself. Making mistakes and understanding why they were mistakes. Building genuine capability through genuine effort over time.

What does not create it: using ChatGPT to generate your essays, submitting AI output with minimal modification, paraphrasing AI-generated text to satisfy course requirements, or relying on any of the other shortcuts that have become normalized over the past three years.

The students most at risk from AI in the job market are not primarily those who studied the wrong subjects. They are those who studied any subject without developing the genuine intellectual capabilities that make a person irreplaceable rather than substitutable.

The Degree Problem Is Real. The Solution Is Not Easier.

Stanford Today cited a concern this week that is worth sitting with: as AI automates more entry-level professional tasks, the traditional path from university graduate to working professional is narrowing. Graduates are arriving in labor markets that previously absorbed entry-level workers into roles that provided on-the-job training, tacit knowledge transfer, and the gradual development of genuine professional competence — and finding those roles have been automated away.

The implications are troubling. Those entry-level roles were not just jobs. They were the mechanism through which the next generation of experienced workers was being built. If the junior developer positions disappear, who becomes the senior developers in ten years? If the entry-level analyst roles are automated, where does the next generation of senior analysts come from?

This is a structural problem that no individual student can solve by changing their major. It requires institutions, employers, and policymakers to rethink how early-career development actually works.

But there is something individual students can do right now, within their current programs, that will meaningfully improve their position relative to AI displacement: develop genuine intellectual capability rather than substituting AI output for it.

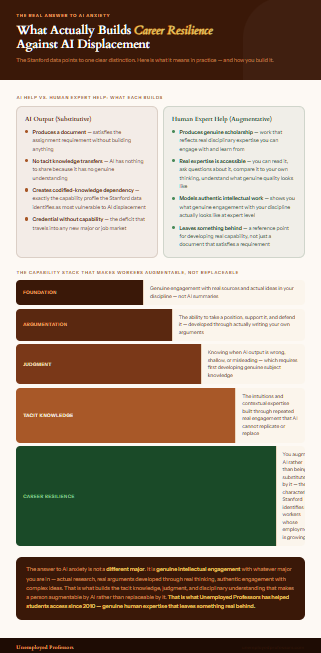

The Stanford data is explicit on this point. Workers whose roles involve augmentative AI use, where AI complements human judgment rather than replacing human task completion, have not experienced the employment declines hitting workers in roles where AI is used purely to automate. The difference is whether there is genuine human thinking in the equation or whether the human has become a conduit for AI output to reach an institutional destination.

Students who are actually developing judgment, building genuine understanding of their disciplines, and producing intellectual work that reflects real engagement with complex ideas are the ones who will have the tacit knowledge and authentic competence that AI cannot replicate. Students who have been using AI as a shortcut to produce academic credentials without the underlying intellectual work are the ones most exposed to the kind of displacement the Stanford research documents.

What This Means for How You Get Academic Help

There is an important distinction here that the current anxious conversation about AI and majors almost entirely misses.

Getting help with your academic work is not the problem. Students have always gotten help — tutors, writing centers, study groups, professional academic writers. The question is what kind of help actually develops you and what kind simply produces a document that satisfies a requirement without building anything.

AI help of the substitutive kind — using ChatGPT to generate your essays, having a language model complete your problem sets, relying on automated tools to do the intellectual work your assignments are designed to require — produces exactly the deficit the Stanford research identifies as making young workers vulnerable. You arrive at the job market with a credential that suggests you can do something, and an actual capability set that reveals you cannot.

Human expert help of the kind Unemployed Professors provides works differently. When a verified subject-matter expert with genuine disciplinary credentials writes an essay on your topic, that essay reflects real intellectual engagement with your subject. You receive something you can read, engage with, ask questions about, compare to your own thinking, and use as a model for understanding what genuine scholarly work in your discipline looks like. The expertise behind the work is real. That reality is accessible to you in ways that AI output is not.

This is a meaningful distinction, especially in an environment where the labor market is differentiating between people with genuine intellectual formation and people with credentials that do not certify that formation.

Unemployed Professors has been built on genuine human expertise since 2010 — verified scholars, real credentials, authentic disciplinary knowledge, and work that reflects actual thinking. Our writers are not algorithms producing statistically probable prose. They are human beings who actually know the subjects they write about, who conduct genuine research, and who produce work that represents real scholarship.

In an environment where AI is exposing the gap between certification and genuine intellectual formation, the difference between getting help from a real expert and getting output from a language model has never been more consequential.

The Bottom Line

Nearly half of college students are considering changing their major because they are afraid AI is going to take the jobs in their field.

They are responding to something real. The Stanford data confirms that entry-level employment in AI-exposed occupations has declined significantly. The anxiety is not irrational.

But the response is misdirected. The students most at risk from AI displacement are not primarily those who studied the wrong things. They are those who did not develop genuine intellectual capability while studying. And a student who has spent three years using AI to generate their academic work and submitting the output with minimal engagement is not going to become less vulnerable to AI displacement by taking a different set of courses that they approach the same way.

The answer to AI anxiety is not a different major. It is genuine intellectual engagement with whatever major you are in — actual research, real arguments developed through real thinking, authentic engagement with the ideas and sources and problems that your discipline requires.

That is what builds the tacit knowledge, judgment, and authentic disciplinary understanding that make a person augmentable by AI rather than replaceable by it. That is what the Stanford research identifies as the characteristic of workers who are not being displaced. And that is what a university education is supposed to produce, when students actually do the work it requires.

When you need help with your academic work, the kind of help that serves your actual interests is human expertise rather than AI output. It gives you a model of real scholarship to engage with. It leaves something behind.