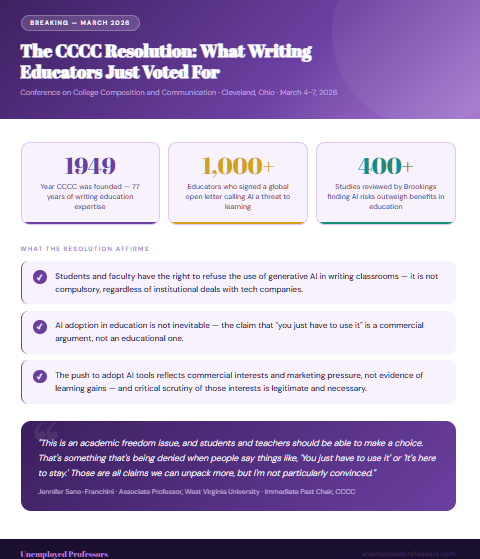

Earlier this month, at its annual convention in Cleveland, the Conference on College Composition and Communication (CCCC) did something that surprised a lot of people in higher education: it voted to say no.

Not a committee recommendation. Not a discussion paper. A formal resolution, overwhelmingly approved, affirming that students and faculty have the right to refuse the use of generative AI in writing classrooms.

The CCCC is not a fringe group. Founded in 1949, it is the world’s largest professional organization dedicated to the research and teaching of writing. These are the people who have spent their careers thinking harder about what writing is, what it does, and why it matters than virtually anyone else on the planet. When they pass a resolution like this, it is worth paying attention to what they are actually saying.

And what they are saying is something that we at Unemployed Professors have been saying since the beginning of the AI wave: the assumption that AI in academic writing is inevitable, necessary, and unavoidable is not a fact. It is a sales pitch.

“You Just Have to Use It.” Do You?

The framing that has dominated the AI-in-education conversation for the past three years goes something like this: AI is here, AI is everywhere, students need to use it to be competitive in the workforce, and anyone who resists is simply delaying the inevitable. Universities have signed multimillion-dollar deals with tech companies on the basis of exactly this argument.

Jennifer Sano-Franchini, an associate professor of English at West Virginia University and immediate past chair of the CCCC, addressed this framing directly in response to the resolution’s passage. “This is an academic freedom issue, and students and teachers should be able to make a choice,” she said. “That’s something that’s being denied when people say things like, ‘You just have to use it,’ ‘It’s here to stay,’ or ‘Students need to be able to use it for their careers.’ Those are all claims we can unpack more, but I’m not particularly convinced.”

That deserves to sit for a moment. The person who just led the world’s largest organization of writing educators is not particularly convinced by the inevitability argument. She is not alone.

Last summer, over 1,000 education professionals from universities across the globe signed an open letter describing AI in education as a threat to student learning and wellbeing, arguing that the push to adopt generative AI in classrooms is driven by a massive marketing campaign to position these products as essential — not by evidence that they actually produce learning gains.

The CCCC resolution did not say AI can never have a role in education. What it explicitly rejected was the premise that its adoption is unavoidable, and the pressure that premise places on students and faculty to participate in something they have substantive reasons to question.

What the Writing Experts Are Actually Seeing

The concerns the CCCC built into their resolution are not abstract. They cover data privacy, labor rights, environmental impact, academic freedom — and, most importantly for the students reading this, the development of critical thinking skills.

That last concern is the one that matters most in the context of academic writing. Writing educators have spent decades studying what the process of writing actually does to the people doing it. The act of writing is not a means of recording ideas you already have — it is a primary mechanism through which ideas develop. The struggle of finding the right argument, the right evidence, the right structure is not friction to be removed from the educational experience. It is the educational experience.

When a student uses AI to generate an essay, they are not saving time on a task that exists to produce a document. They are removing themselves from a process that exists to develop their ability to think. This is what the CCCC’s resolution is defending — not the essay as artifact, but writing as intellectual formation.

The concern is well supported by research. A Brookings Institution study published in early 2026, drawing on data from over 500 educators, parents, and students across 50 countries and more than 400 academic studies, found that the risks of AI in education currently outweigh the benefits. The researchers pointed specifically to cognitive offloading, in which relying on AI for intellectual tasks atrophies the student’s own capacity to perform them, as a central concern. A separate Microsoft study reached similar conclusions about the relationship between AI use and critical thinking skills.

Writing educators see this playing out in classrooms every day. Students who have used AI to generate their essays can often talk fluently about the topic on the surface. Ask them to go one level deeper — to actually defend an argument, to explain how two sources relate to each other, to develop an original claim — and the absence of the underlying thinking is immediately apparent.

The Tech Company Push and Who Benefits

One of the more pointed aspects of the CCCC resolution is what it says, implicitly, about the commercial forces driving AI adoption in higher education. The resolution’s supporters were explicit about this: the inevitability narrative pushing universities to sign expensive deals with technology companies is not a neutral description of reality. It is marketing.

Universities have entered multimillion-dollar partnerships with AI companies on the premise that students need these tools to succeed. The tech companies benefit enormously from these deals, not just financially but because institutional adoption normalizes their products and creates the very market dependence they are promising to serve. Sonja Drimmer, an associate professor at the University of Massachusetts at Amherst who has written extensively about AI resistance in education, made this point plainly: focusing on the right to refuse directs scrutiny toward the commercial interests that promote inevitability narratives in order to head off critical engagement.

Students are the ones who end up caught in this dynamic. They are told they must use AI tools, often through institutional mandates or implicit pressure, while simultaneously being told that using AI for their academic work is a violation of academic integrity. The mixed message is not an accident of policy implementation. It reflects a genuine tension between the commercial logic driving adoption and the educational logic that justifies the existence of academic institutions in the first place.

What This Means for Students Right Now

The CCCC resolution does not change what professors are allowed to do. Many institutions and faculty still run submissions through AI detectors, and many students still face academic integrity proceedings on the basis of those tools’ outputs, which are, as we have written previously, deeply unreliable.

What the resolution does is provide institutional backing for something that should have been obvious from the beginning: students and faculty who do not want AI in their academic writing have legitimate, well-reasoned grounds for that position, and those grounds deserve respect.

For students navigating this environment, the practical implications are significant.

First, the argument that you must use AI to stay competitive is not an established fact — it is a claim that even the leading experts in writing education find unconvincing. You do not owe your academic work to a technology company’s revenue model.

Second, the evidence is accumulating that using AI to write your essays does not just risk academic integrity violations. It actually makes you worse at thinking. The Brookings research, the Microsoft study, and the daily observations of writing educators all point in the same direction: cognitive offloading is real, and the cost is paid in the development of your own intellectual capabilities.

Third, if you are in a situation where you need help with academic writing that genuinely exceeds your current capabilities — a graduate-level assignment, a deadline you cannot meet, a subject that requires expertise you do not yet have — the solution that serves your actual interests is not AI generation. It is genuine human expertise.

The Human in the Loop Is Not Optional

This is precisely the argument that Unemployed Professors was built on, and it is the argument that the CCCC’s resolution validates at the highest level of academic authority.

AI does not understand your topic. It does not develop original arguments. It does not engage meaningfully with sources. It produces statistically probable word sequences that resemble academic writing in the same way that a sophisticated imitation of a painting resembles art — formally similar at a distance, fundamentally different under examination.

When writing educators say they are defending the right to refuse AI, they are not being nostalgic about typewriters. They are defending the proposition that writing is thinking, that thinking cannot be outsourced, and that a process which replaces a student’s intellectual work with a machine’s statistical approximation of that work has failed the student regardless of whether anyone detects it.

That is why at Unemployed Professors, we have never offered AI-generated writing and never will. Our model is built on the same conviction the CCCC just voted to formalize: that genuine scholarship requires genuine human expertise, and that there is no algorithmic substitute for a real scholar who actually knows their subject.

Every essay produced by Unemployed Professors is written by a verified human expert — someone with actual credentials in the relevant field, who conducts real research, develops original arguments, and writes with the authentic scholarly voice that comes from genuine disciplinary formation. Not AI output formatted to look like academic work. Actual scholarship from actual scholars.

The CCCC’s resolution is a vote for exactly that distinction. When the world’s leading writing educators say that AI cannot replace the human intellectual work at the center of academic writing, they are describing the same reality we have been addressing since 2010.

The Bottom Line

The vote in Cleveland was not just a professional organization registering a preference. It was the accumulated judgment of the people who understand academic writing most deeply, delivered formally and overwhelmingly, at a moment when universities are under significant commercial pressure to pretend that judgment doesn’t matter.

The students who will benefit most from understanding what that vote means are the ones who are being told — by institutions, by tech companies, by the ambient pressure of an AI-saturated environment — that they have no choice but to use these tools.

You have a choice. The CCCC just voted to say so.

And when you need work that reflects genuine human expertise rather than algorithmic simulation, Unemployed Professors is here to provide exactly that.

POST YOUR PROJECT today and work with a verified expert who actually knows your subject.